In the early days of the digital age, we were obsessed with "speed." We wanted to know how many megabits per second our providers could squeeze through the lines. But as we move through 2026, the conversation has shifted. Whether you’re trying to land a remote job from a cabin in the Northwoods or you’re a rural gamer tired of seeing "Connection Lost" on your screen, you’ve likely realized that speed is only half the battle. The other half—and arguably the more important half for modern applications—is latency.

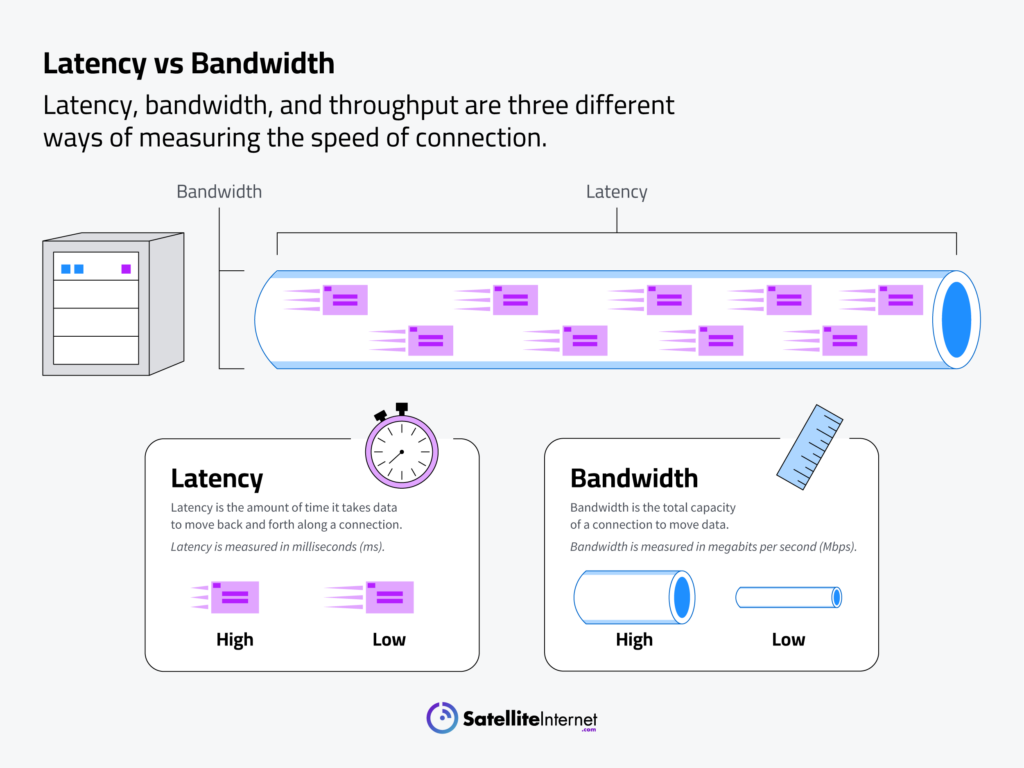

Latency, often reported as "ping," is the heartbeat of your internet connection. It is the time, measured in milliseconds (ms), that a signal takes to travel from your device to a server and back again. Bandwidth tells you how much data can flow through the pipe each second; latency tells you how long each trip through that pipe takes. In a world where we rely on real-time video conferencing, cloud-based productivity tools, and fast-paced online gaming, a high-speed connection with high latency feels like driving a Ferrari through a series of endless stoplights. You might have the power, but you aren’t getting anywhere quickly.
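To put rough numbers on the stoplight analogy, here is a back-of-the-envelope sketch in Python. It uses a deliberately simplified model, which is an assumption and not how any real network stack behaves in detail: every round trip costs one full latency period, and the payload then moves at the advertised bandwidth. The function name `load_time_ms` and all of the example figures (20 round trips, 8 megabits of data, and the two connection profiles) are hypothetical and exist only for illustration.

```python
# Simplified model (assumption): total time = round trips x latency
# + payload size / bandwidth. Real connections also involve TCP slow start,
# encryption handshakes, packet loss, and parallel requests.

def load_time_ms(round_trips: int, rtt_ms: float,
                 payload_megabits: float, bandwidth_mbps: float) -> float:
    """Estimate, in milliseconds, how long a sequence of requests takes."""
    waiting = round_trips * rtt_ms                         # time spent waiting on round trips
    transfer = payload_megabits * 1000 / bandwidth_mbps    # seconds converted to ms
    return waiting + transfer


# Hypothetical page: 20 sequential round trips, 8 megabits (1 MB) of data.
fast_but_laggy = load_time_ms(20, rtt_ms=600, payload_megabits=8, bandwidth_mbps=500)
slower_but_snappy = load_time_ms(20, rtt_ms=20, payload_megabits=8, bandwidth_mbps=50)

print(f"500 Mbps, 600 ms latency: {fast_but_laggy:.0f} ms")   # 500 Mbps, 600 ms latency: 12016 ms
print(f"50 Mbps, 20 ms latency:   {slower_but_snappy:.0f} ms")  # 50 Mbps, 20 ms latency:   560 ms
```

Even in this toy model, the connection with ten times the bandwidth takes more than twenty times longer, because the 20 round trips at 600 ms each swamp the few milliseconds saved on the actual data transfer.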